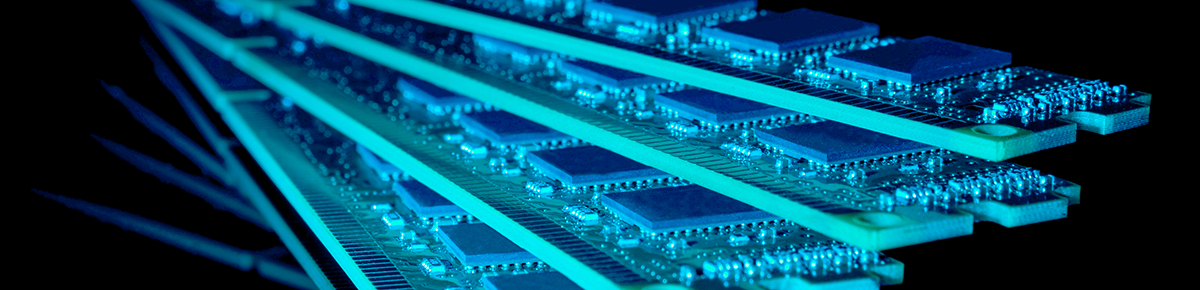

Accelerate your neural networks today!

AutoDL framework for neural network compression & acceleration

up to 20 times neural network acceleration

up to 25 times neural network compression

up to 70% hardware cost reduction

2 weeks to a compressed model

ENOT is a framework with a Python API that can be quickly and easily integrated within various neural network training pipelines

Product

Language

CNN

RNN

LSTM

DNN

Machine Learning Frameworks

Deployment

NN Types

Hardware Libraries

CPU

FPGA

GPU

NPU

Runtime

On-premises

On the cloud

Processing images faster

Smartphone manufacturer

Case

Client required faster image processing on smartphones for a better user experience without sacrificing accuracy.

Results

4.8 times acceleration without accuracy degradation

Reduction of cloud costs

Case

Client was facing high cloud server costs for their facial recognition pipeline, so their neural networks were accelerated to bring those costs down.

Results

3.2 times reduction in cloud infrastructure costs

Low latency & RAM consumption

Case

Client needed to reduce their NN model size to meet their RAM limitations while maintaining low latency.

Results

4.8 MB NN model size

9.1 MB peak RAM consumption

4 ms latency

Meet chipset's RAM limitations

Case

Client required compression of their neural network to meet their chipset's 5 MB RAM limitation.

Results

8 times reduction of peak RAM consumption, from 36 MB to 4.5 MB

Reduction of hardware costs

Case

Client was incurring very high hardware costs from operating object detection on 25 video streams.

Results

4.2 times acceleration

2.2 times reduction in server costs

Faster facial keypoint detection

Case

Client could not achieve fast enough facial keypoint detection to provide a seamless mobile app experience.

Results

48% acceleration of the baseline model on multiple mobile platforms

Telecommunications

Smartphone manufacturer

Electronics manufacturer

AI-based mobile app

Oil & Gas

Electronics

Healthcare

Oil & Gas

Autonomous Driving

Cloud Computing

Telecom

Mobile Apps

Internet of Things

Robotics

ENOT applies neural architecture search (NAS), pruning, quantization, and distillation simultaneously to achieve the highest compression/acceleration rate without accuracy degradation. It automates the search for the optimal neural network architecture, taking into account latency, RAM, and model size constraints for different hardware and software platforms.

Technology

Our neural network architecture search engine automatically finds the best possible architecture from millions of available options, taking into account several parameters:

— input resolution

— depth of neural network

— operation type

— activation type

— number of neurons at each layer

— bit width for target hardware platform for NN inference
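To make the idea of a constrained architecture search concrete, here is a minimal sketch in plain Python. It is not ENOT's API or algorithm: the search space values, the `estimate` cost model, and the `search` function are all hypothetical, and the sketch simply enumerates a tiny space by brute force. It covers only four of the parameters listed above (operation type and activation type are left out) and only two constraints (model size and latency).

```python
import math
from itertools import product

# Hypothetical search space; the values are illustrative, not taken from ENOT.
SEARCH_SPACE = {
    "input_resolution": [128, 160, 224],
    "depth": [4, 8, 12],           # number of layers
    "width": [32, 64, 128],        # neurons per layer
    "bit_width": [8, 16, 32],      # bits per weight on the target hardware
}


def estimate(arch):
    """Toy cost model (an assumption): returns (size_mb, latency_ms, score)."""
    params = arch["depth"] * arch["width"] ** 2
    size_mb = params * arch["bit_width"] / 8 / 1e6
    # Work grows with parameter count and with the square of the resolution.
    work = params * (arch["input_resolution"] / 32) ** 2
    latency_ms = work * (arch["bit_width"] / 32) / 1e6
    # Stand-in for accuracy: larger models and inputs score higher,
    # lower bit widths pay a small penalty.
    score = (
        math.log2(params)
        + math.log2(arch["input_resolution"])
        - (32 - arch["bit_width"]) / 32
    )
    return size_mb, latency_ms, score


def search(max_size_mb, max_latency_ms):
    """Return the best-scoring architecture that meets both limits, or None."""
    best, best_score = None, float("-inf")
    keys = list(SEARCH_SPACE)
    for values in product(*(SEARCH_SPACE[k] for k in keys)):
        arch = dict(zip(keys, values))
        size_mb, latency_ms, score = estimate(arch)
        feasible = size_mb <= max_size_mb and latency_ms <= max_latency_ms
        if feasible and score > best_score:
            best, best_score = arch, score
    return best


if __name__ == "__main__":
    print(search(max_size_mb=0.1, max_latency_ms=1.0))
```

Brute-force enumeration only works because this toy space has 81 candidates. With millions of options, a real search engine would need measured latency on the target device and a far more efficient search strategy.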

NAS

Pruning

Quantization

Distillation
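As a rough illustration of two of the methods named above, the sketch below shows magnitude pruning and uniform symmetric quantization on a plain list of weights. These are textbook versions of the techniques, not ENOT's implementation; the function names and the example weights are made up for illustration.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the fraction `sparsity` of weights with the smallest magnitude."""
    k = int(len(weights) * sparsity)
    if k == 0:
        return list(weights)
    # Indices sorted by absolute value; the first k are pruned.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


def quantize_symmetric(weights, bits=8):
    """Map floats to signed integer codes in [-qmax, qmax]; returns (codes, scale)."""
    qmax = 2 ** (bits - 1) - 1
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate float weights from integer codes."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    weights = [0.5, -0.02, 0.9, 0.01, -0.7, 0.03]   # illustrative values
    pruned = magnitude_prune(weights, sparsity=0.5)
    codes, scale = quantize_symmetric(pruned, bits=8)
    print(pruned, codes, dequantize(codes, scale))
```

Pruning reduces the number of non-zero weights and quantization reduces the bits stored per weight, so the two savings multiply. In practice both usually need fine-tuning afterwards to recover accuracy, which this sketch does not attempt.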

Try ENOT

Pricing

Don't compromise on features.

Process locally, deploy anywhere, optimize as many models as you want.

| Simultaneous vRAM usage during optimization | <25 GB | <100 GB | >100 GB |
| --- | --- | --- | --- |
| Price per GB of vRAM | $60/month | $55/month | $45/month |
| Number of optimized models | unlimited | unlimited | unlimited |
| Target hardware | any hardware (CPU/GPU) | any hardware (CPU/GPU) | any hardware (CPU/GPU) |
| Deployment on cloud/premises | available | available | available |
| Inference | | | |
| Data privacy and protection | local storage and processing | local storage and processing | local storage and processing |

* The monthly license plan is tied to your machine's total GPU vRAM usage during training.

* Even if you stop your subscription, optimized models are free to use forever.
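For a rough feel of what a plan costs, the per-GB prices and vRAM tiers listed above can be turned into a small calculator. This sketch assumes the monthly bill is simply the tier's per-GB price multiplied by the total vRAM in use, which the brochure implies but does not state outright. The function name `monthly_cost` is hypothetical, and the source does not say which tier applies at exactly 100 GB, so that case is rejected rather than guessed.

```python
def monthly_cost(vram_gb):
    """Estimated monthly price in USD for a given total GPU vRAM usage in GB.

    Assumption: cost = per-GB rate of the matching tier * vRAM in GB.
    """
    if vram_gb <= 0:
        raise ValueError("vRAM usage must be positive")
    if vram_gb < 25:
        rate = 60      # "<25GB" tier
    elif vram_gb < 100:
        rate = 55      # "<100GB" tier
    elif vram_gb > 100:
        rate = 45      # ">100GB" tier
    else:
        raise ValueError("the pricing tiers do not cover exactly 100 GB")
    return vram_gb * rate


if __name__ == "__main__":
    for gb in (24, 80, 128):
        print(gb, "GB ->", monthly_cost(gb), "USD/month")
```

Under this assumption, a single 24 GB GPU falls in the first tier, while a multi-GPU machine with 128 GB in total would be billed at the lowest per-GB rate.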

Our Partners

Our Team

Chief Executive Officer: Serge Aliamkin

Chief Technology Officer: Alex Goncharenko

Chief Financial Officer: Michael Berkov

Chief Research Officer: Ivan Oseledets

Strategic Partnerships: Vlad Dyubanov

Business Development: Kristians Karlsons

Do you have any questions? Contact us!

enot.ai

All rights reserved

enot@enot.ai